GPU Compare
12/2/2023

IBM presented a new analog AI chip in August that mimics the human brain and can perform up to 14 times more efficiently. D-Matrix claims its solution can reduce costs by 10 to 20 times – and in some cases as much as 60 times.īeyond d-Matrix’s technology, other players are beginning to emerge in the race to outpace Nvidia’s H100. The solution, this firm claims, is its specialized DIMC architecture that mitigates many of the issues in GPUs. or visit one of the following popular comparisons (in the last day) below. This means cooling demands are then heightened. Compare the performance of up to 5 different CPUs. Given the widespread issues AMD users are facing with 5000 series GPUs (blue/black screens etc.), it is unlikely that AMD would have posed a. Moving data out of DRAM also means higher energy consumption alongside reduced throughput and added latency. Nvidia’s 3080 GPU offers once in a decade price/performance improvements: a 3080 offers 50 more effective speed than a 2080 at the same MSRP. The 3050 also includes an encoder (NVENC) for sharper images and. DLSS technology uses the 3050’s tensor cores to scale up resolutions whilst maintaining high frame rates and without losing significant image quality. Can I run it What games can I run Rate My PC Statistics.

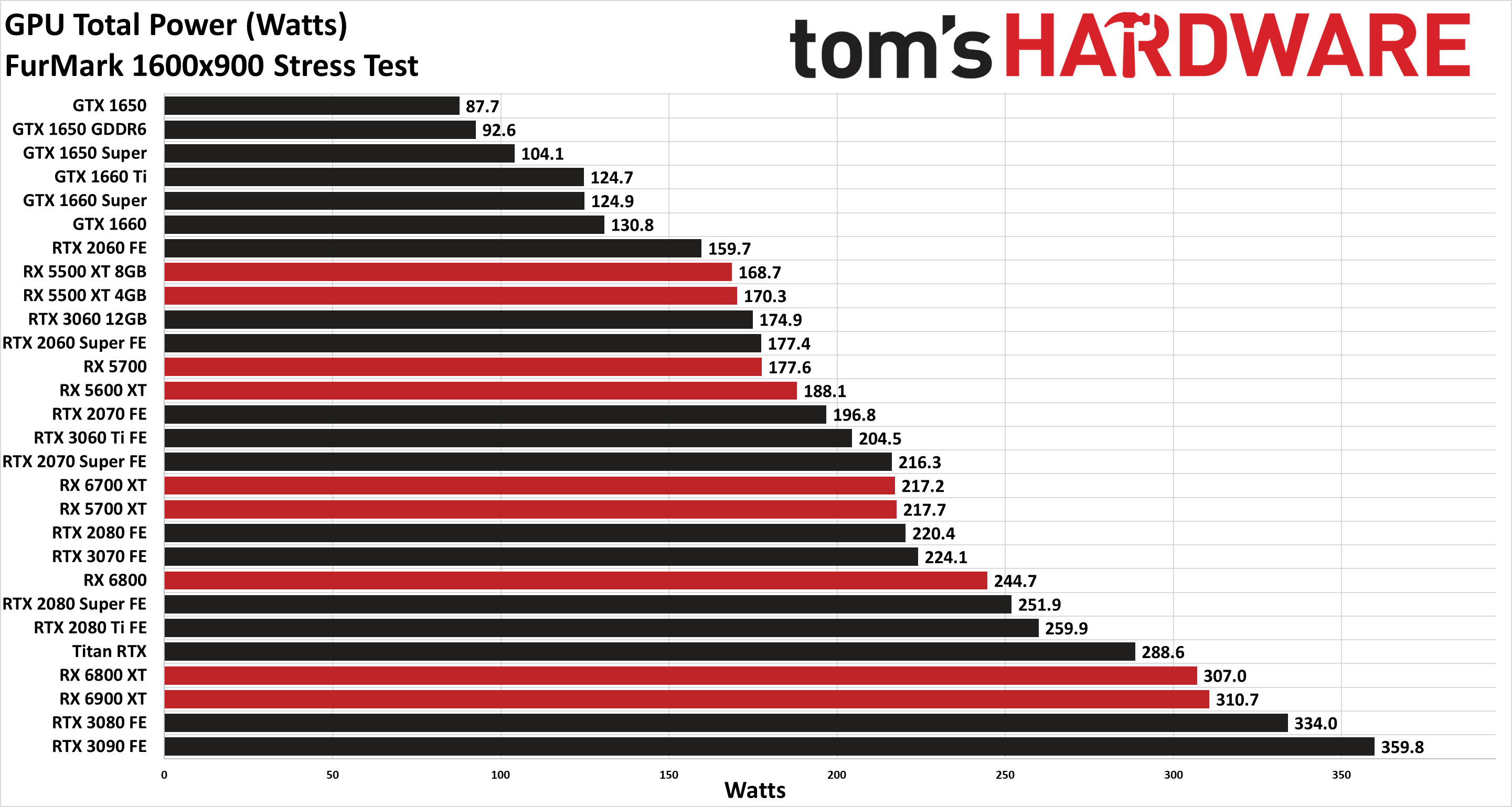

Compare processors Processors ranking Intel CPU ranking AMD CPU ranking CPU price to performance Games. This is because the bandwidth demands of running AI inference lead to GPUs spending a lot of time idle, waiting for data to come in from DRAM. The 3050 features 2560 CUDA cores, a boost clock frequency of 1.78 GHz, 8 GB of the latest GDDR6 memory and NVIDIA’s DLSS. Compare graphics cards Graphics card ranking NVIDIA GPU ranking AMD GPU ranking GPU price to performance Processors. The 1660 range of cards sit in the sweet spot. The 1660 Super has 14 Gbps GDDR6 (versus 12Gbps GDDR6 for the 1660 Ti and 8Gbps GDDR5 for the 1660). The GTX 1660 Super has a launch price of just 230 USD with comparable performance to the 2 Ti. But GPUs aren’t optimized for LLM inference, according to d-Matrix, and too many GPUs are needed to handle AI workloads, leading to excessive energy consumption. Device: 10DE 21C4 Model: NVIDIA GeForce GTX 1660 SUPER. The leading components are GPUs and, more specifically, Nvidia’s A100 and newer H100 units. With generative AI rapidly expanding, the industry is locked in a race to build increasingly powerful hardware to power future generations of the technology. These unique cards can produce up to 20 times high throughput for generative inference on large language models (LLMS), up to 20 times lower inference latency for LLMs, and up to 30 times cost savings when compared with traditional GPUs.
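The 3080-versus-2080 claim is a statement about performance per dollar. A minimal sketch of that arithmetic follows, assuming both speed and price are expressed relative to the 2080 (baseline 1.0 each); the function name `perf_per_dollar` and the second, pricier card are hypothetical illustrations, not figures from the post.

```python
def perf_per_dollar(relative_speed: float, relative_price: float) -> float:
    """Performance per unit price; both inputs are unit-free ratios to a baseline card."""
    return relative_speed / relative_price

# Baseline (assumption): the 2080 is normalized to speed 1.0 and price 1.0.
baseline = perf_per_dollar(1.0, 1.0)

# Per the post: the 3080 is 50% faster at the same MSRP, so its price ratio stays 1.0.
rtx_3080 = perf_per_dollar(1.5, 1.0)
improvement = rtx_3080 / baseline

# Hypothetical comparison: a card that is 50% faster but 20% more expensive.
pricier_card = perf_per_dollar(1.5, 1.2) / baseline

print(f"3080 value vs 2080: {improvement:.2f}x")
print(f"Hypothetical pricier card: {pricier_card:.2f}x")
```

Keeping price in the denominator makes it clear why a speed gain "at the same MSRP" translates one-for-one into value, while the same gain at a higher price is worth proportionally less.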
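The per-pin data rates quoted for the 1660 range only become a bandwidth figure once you multiply by the width of the memory bus. The sketch below assumes a 192-bit bus for all three cards (the post does not state the bus width) and uses a hypothetical helper, `peak_bandwidth_gb_s`; the results are theoretical peaks, not measured throughput.

```python
def peak_bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    Each pin transfers data_rate_gbps gigabits per second; multiply by the
    number of pins (bus width) and divide by 8 to convert bits to bytes.
    """
    return data_rate_gbps * bus_width_bits / 8

# Assumption: every card in the 1660 range uses a 192-bit memory bus.
BUS_WIDTH_BITS = 192

# Per-pin data rates from the post, in Gbps.
cards = {
    "GTX 1660 Super (GDDR6)": 14.0,
    "GTX 1660 Ti (GDDR6)": 12.0,
    "GTX 1660 (GDDR5)": 8.0,
}

bandwidths = {name: peak_bandwidth_gb_s(rate, BUS_WIDTH_BITS) for name, rate in cards.items()}
for name, bw in bandwidths.items():
    print(f"{name}: {bw:.0f} GB/s")
```

Because the bus width is held constant, the bandwidth gap between the three cards is exactly the ratio of their data rates.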
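d-Matrix's argument that GPUs sit idle waiting on DRAM during inference can be made concrete with a back-of-the-envelope roofline check. This is a simplified sketch, not d-Matrix's analysis: it assumes that generating one token reads every weight once from DRAM and uses it in one multiply-add (2 FLOPs), ignores the KV cache and activations, and uses a hypothetical accelerator (300 TFLOP/s, 2 TB/s) that does not correspond to any real product.

```python
from dataclasses import dataclass

@dataclass
class Accelerator:
    peak_tflops: float     # peak compute, TFLOP/s (hypothetical value below)
    bandwidth_tb_s: float  # peak DRAM bandwidth, TB/s (hypothetical value below)

    @property
    def balance(self) -> float:
        """Machine balance: FLOPs the chip can perform per byte fetched from DRAM."""
        return self.peak_tflops / self.bandwidth_tb_s

def decode_intensity(bytes_per_param: float, batch: int = 1) -> float:
    """Arithmetic intensity (FLOPs per DRAM byte) of token generation.

    Simplifying assumption: each weight is read once per step and reused by
    every sequence in the batch for one multiply-add (2 FLOPs each).
    """
    return 2.0 * batch / bytes_per_param

def compute_utilization(chip: Accelerator, intensity: float) -> float:
    """Roofline upper bound on the fraction of peak compute that can be used."""
    return min(1.0, intensity / chip.balance)

chip = Accelerator(peak_tflops=300.0, bandwidth_tb_s=2.0)

# 16-bit weights (2 bytes per parameter) at several batch sizes.
for batch in (1, 8, 64, 256):
    intensity = decode_intensity(bytes_per_param=2.0, batch=batch)
    util = compute_utilization(chip, intensity)
    print(f"batch={batch:>3}: intensity={intensity:.0f} FLOP/byte, "
          f"utilization <= {util:.1%}")
```

Under these assumptions, batch-1 generation can use well under 1% of the chip's peak compute, which is the "idle, waiting for data" effect the post describes. Batching raises the bound because each weight fetched from DRAM is reused more times, which is one reason memory-side approaches like in-memory compute target this bottleneck.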
